When looking at options for replacing Worker Roles in Azure Cloud Services, Azure Functions increasingly look like they fit the bill.

I like the idea of just writing code that focuses on what needs to be done and not having to write plumbing code like polling a queue, handling parallel execution or dealing with poison messages.

With Azure Functions, you can trigger your code using blobs, event hubs, queues, timers and webhooks.

Now that Azure Functions can run on Linux and in a Docker container, we can deploy them however we want.

The idea is this:

- Use Azure Functions as the base image of my workers

- Each worker will handle one message type

- Create a Docker image for each worker

- Deploy them to a Kubernetes cluster so they can scale independently

To explore this idea, we’re going to build a small proof of concept.

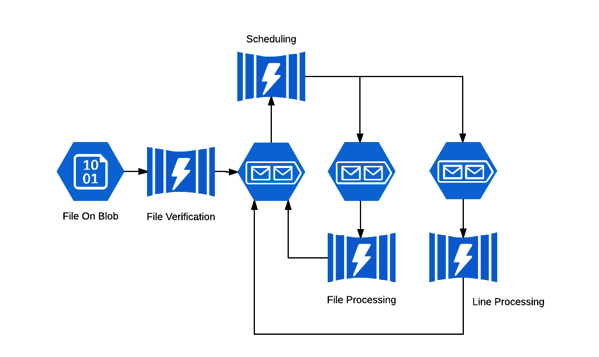

A text file will get dropped into a blob storage container, which will trigger a function that verifies the file has contents.

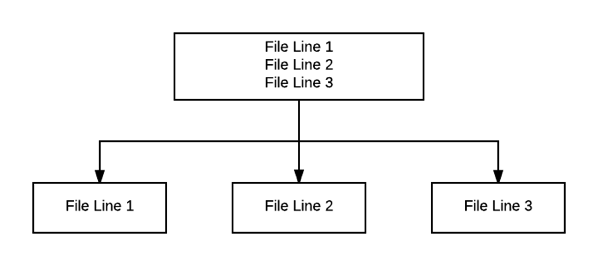

If it does, another function will read the file, split it by line and save each line to a separate file.

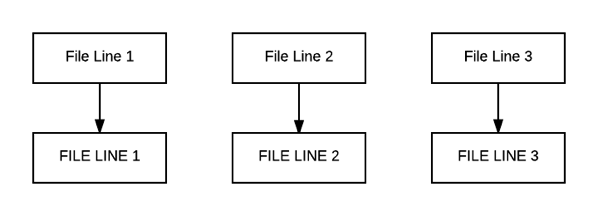

Once the split is done, work will queue up for another function that will uppercase the contents of each new file.
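To make the three steps concrete, here is a minimal sketch of the work each function does, written as plain Python with no Azure bindings at all. The function names and the output file naming scheme are made up for illustration. Treating whitespace-only files as empty and skipping blank lines during the split are also my assumptions, not decisions made yet.

```python
from typing import Dict


def has_contents(text: str) -> bool:
    """Step 1: verify the file has contents.

    Assumption: a file with only whitespace counts as empty.
    """
    return bool(text.strip())


def split_lines(name: str, text: str) -> Dict[str, str]:
    """Step 2: split a file by line, one output file per line.

    Returns a mapping of (hypothetical) output file name to contents.
    Assumption: blank lines are skipped.
    """
    lines = [line for line in text.splitlines() if line.strip()]
    return {f"{name}.{i}.txt": line for i, line in enumerate(lines, start=1)}


def uppercase(text: str) -> str:
    """Step 3: uppercase the contents of one split file."""
    return text.upper()
```

In the real thing, each of these would sit behind its own trigger in its own container. Keeping the logic this small is what makes one worker per message type plausible.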

None of the functions described so far will enqueue work for other functions. That will be left to yet another function that handles the scheduling of work.
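One way to picture the scheduler is as a lookup from "what just finished" to "what runs next". The sketch below is only that, a guess at the shape in plain Python. The event names, the message types, and the idea that workers report completion as events are all assumptions, since none of that has been designed yet.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical event names mapped to the (hypothetical) message type
# of the worker that should run next. None means the pipeline is done.
NEXT_STEP: Dict[str, Optional[str]] = {
    "file-uploaded": "verify",
    "verified": "split",
    "split-done": "uppercase",
    "uppercased": None,
}


def schedule(event: str, files: List[str]) -> List[Tuple[str, str]]:
    """Return the (message_type, file_name) messages to enqueue for an event.

    Unknown events and finished pipelines produce no messages.
    """
    step = NEXT_STEP.get(event)
    if step is None:
        return []
    # One message per file, so a split into N files fans out to N messages.
    return [(step, file_name) for file_name in files]
```

For example, `schedule("split-done", ["input.1.txt", "input.2.txt"])` would produce two `uppercase` messages, one per new file. The workers themselves never need to know what comes after them.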

The overall architecture should look something like this:

I should mention that while I’ve used some of these technologies before, some of this is still new to me.

This feels like it should all just work together nicely, but I haven’t really dug into it yet.

Either way, it will be a bit of fun figuring out whether this is viable or crashes and burns 🙂

In the next post we’ll take a crack at designing/implementing the first function, the scheduler.